Why Identity Security Is Critical in the Era of AI Agents

Learn how integration gaps and token-based access create security risks with AI agents.

Vaibhavi Kadam

AI agents increasingly operate outside the reach of Identity and Access Management (IAM) controls.

As a result, organizations are frequently seeing vulnerabilities associated with AI agents, such as prompt injection and data leakage. These are real problems, but for security leaders and the teams building on top of AI, there's a more immediate issue: whether IAM is actually governing the integrations that AI agents run through.

Spoiler: In most organizations, it isn't.

In this post, we walk through why this is such a critical issue, what the common points of integration failure look like, and how to address them.

How Do AI Agents Actually Operate?

Traditional IAM was designed around a human authentication event: a user presents credentials, MFA fires, a session is created, and access is granted. All of this is bounded, auditable, and familiar.

However, AI agents don't follow the same pattern. They operate through service identities and delegated credentials.

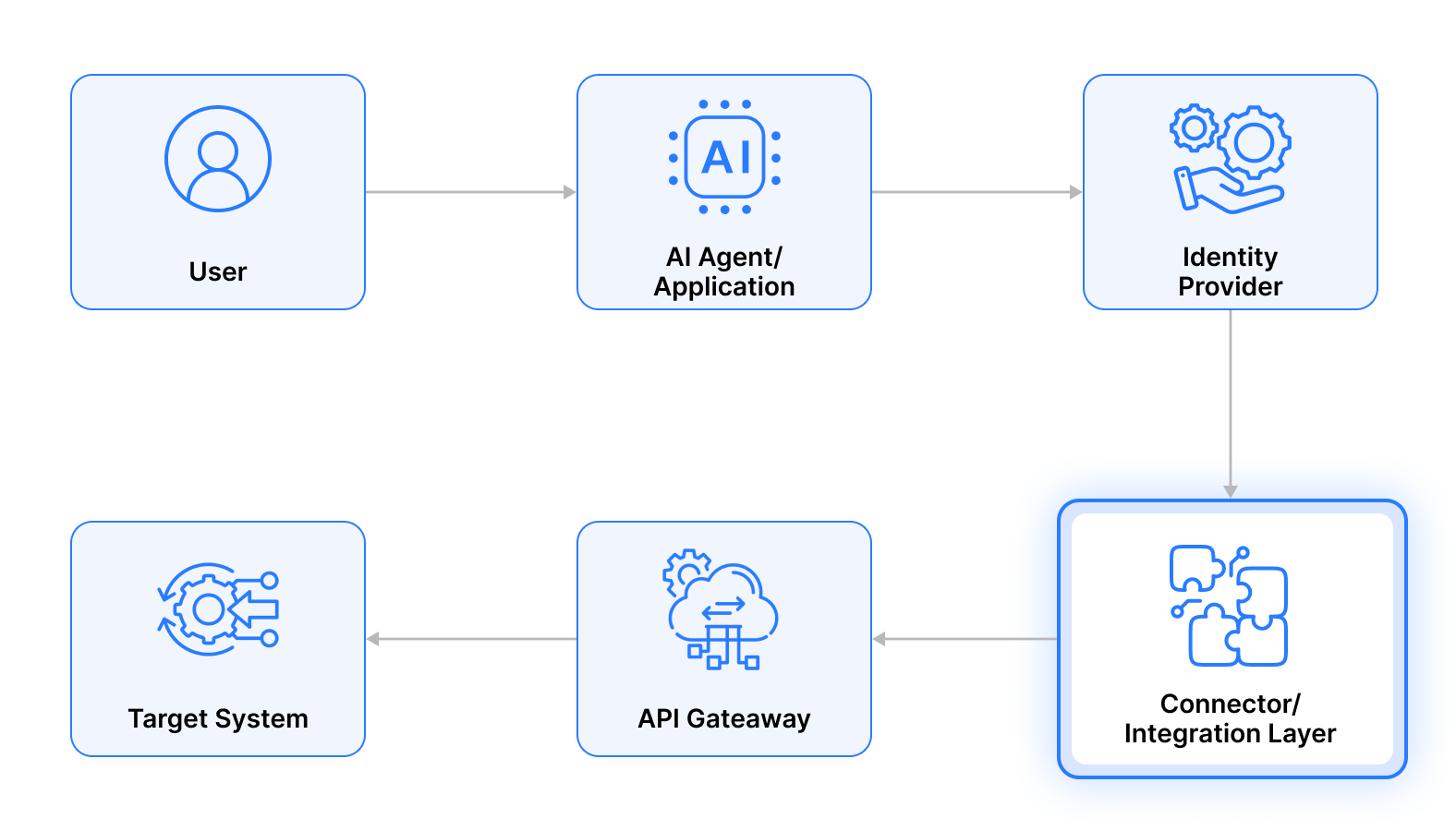

A typical agent interaction looks more like this:

- An OAuth 2.0 client credentials grant may issue a token to the agent (though other authentication mechanisms like API keys, service accounts, or workload identity federation are also common)

- The agent calls multiple downstream APIs using that token

- Some calls are made on behalf of a user via token exchange

- The token may be cached and reused across sessions

- Most importantly, there's no human re-authentication in the loop

The identity decisions in that flow aren't happening at login. They're happening inside connectors, API gateways, and orchestration layers, all places where IAM policy often has limited reach.

So, if your enforcement model assumes a session boundary that agents don't respect, you have a gap.

The Most Common Integration Failure Points

The failure patterns are consistent enough that if you've worked across a few enterprise environments, you've seen all of them.

- Long-lived credentials embedded in connectors:

An agent is issued an OAuth client secret or a static API key. That credential gets stored in a connector configuration, sometimes hardcoded, sometimes in a secrets manager that nobody rotates.

When something changes (a user's access is revoked, an endpoint is compromised, or anomalous behavior is flagged), the connector doesn't re-evaluate access. It keeps making calls, and the result is silent, unrevoked access.

IAM has the right policy, yet the connector bypasses it entirely.

- Delegated tokens that outlive their authorization:

Many integrations validate access scope (for example, in OAuth-based flows) at token issuance and never again. If a user's privileges change after an agent has been granted delegated access via OAuth token exchange, that agent session can continue uninterrupted.

The access decision was made once, at a point in time that no longer reflects reality.

- Orchestration layers are becoming shadow identity brokers:

AI agents frequently run through middleware, and these systems aggregate credentials, cache tokens, and distribute requests across services.

Over time, they accumulate permissions that nobody has a clean inventory of. They sit outside centralized IAM visibility. The logs exist, but you can't reconstruct the full identity chain from them, which matters a lot when you're in incident response at an odd hour.
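To make the delegated-token failure above concrete, here is a minimal Python sketch of re-checking scope on every call rather than only at issuance. `DelegatedToken`, `current_entitlements`, and the scope names are hypothetical, and the in-memory dictionary stands in for whatever entitlement source your IAM system exposes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DelegatedToken:
    """Hypothetical delegated token; scopes are a snapshot taken at issuance."""
    agent_id: str
    user_id: str
    scopes: frozenset


# Hypothetical entitlement store. In practice this would be a lookup
# against the IAM system, not a module-level dictionary.
current_entitlements = {"alice": {"crm:read", "crm:write"}}


def effective_scopes(token: DelegatedToken) -> frozenset:
    """Intersect the token's snapshot scopes with what the user holds right now."""
    live = current_entitlements.get(token.user_id, set())
    return token.scopes & frozenset(live)


def is_allowed(token: DelegatedToken, required_scope: str) -> bool:
    """Decide per call, using current entitlements instead of the snapshot."""
    return required_scope in effective_scopes(token)


token = DelegatedToken("agent-7", "alice", frozenset({"crm:read", "crm:write"}))
allowed_before = is_allowed(token, "crm:write")

# The user's write privilege is removed after the token was issued.
current_entitlements["alice"].discard("crm:write")
allowed_after = is_allowed(token, "crm:write")
```

The token itself never changes in this sketch. What changes is that the access decision is recomputed on each call, so the agent loses `crm:write` as soon as the delegating user does.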

Where Enforcement Now Needs to Live

The old perimeter model put enforcement at the edge: user authenticates, gets in, operates within a bounded system. That's gone for most modern architectures, and AI agents accelerate the erosion.

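The real enforcement chain now runs from the user to the agent, through connectors, orchestration layers, and API gateways, to the downstream systems the agent calls.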

IAM needs to operate at every link. That means:

- Token issuance via short-lived credentials or federated identity

- Continuous token validation at the API gateway layer, not just at issuance

- Scope enforcement that reflects current entitlements, not snapshot entitlements from when the token was first issued

- Revocation that propagates, so pulling a user's access actually pulls the agent's delegated access too

- Logging that captures the full chain: which identity, acting as whom, calling what, with what scope, with what outcome

If any of those links is missing, you have inconsistent enforcement. And inconsistency is the exploitable condition. Tokens don't forget. IAM policies assume they do.
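As a rough illustration of validation on every request and revocation that propagates, the sketch below shows a gateway-side check in Python. The `Gateway` and `Token` classes and the in-memory revocation sets are assumptions made for the example. A real gateway would also verify token signatures and would read revocations from the identity provider.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Token:
    """Hypothetical token record as seen by the gateway."""
    token_id: str
    agent_id: str
    on_behalf_of: str   # the delegating user
    expires_at: float   # epoch seconds


@dataclass
class Gateway:
    revoked_users: set = field(default_factory=set)
    revoked_tokens: set = field(default_factory=set)

    def revoke_user(self, user_id: str) -> None:
        """Revoking a user implicitly revokes every token delegated by them."""
        self.revoked_users.add(user_id)

    def validate(self, token: Token, now: float = None) -> str:
        """Run on every request, not only when the token is first presented."""
        now = time.time() if now is None else now
        if now >= token.expires_at:
            return "expired"
        if token.token_id in self.revoked_tokens:
            return "token_revoked"
        if token.on_behalf_of in self.revoked_users:
            return "delegator_revoked"
        return "ok"


gateway = Gateway()
agent_token = Token("t1", "agent-7", "alice", expires_at=1000.0)
first_call = gateway.validate(agent_token, now=500.0)

gateway.revoke_user("alice")
second_call = gateway.validate(agent_token, now=500.0)
```

Because the check keys on the delegating user as well as the token, pulling the user's access also stops the agent's calls on the very next request.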

What Strong IAM Integration Looks Like

The organizations handling this well aren't necessarily using the most sophisticated tooling. They've made a set of deliberate architectural decisions:

Every agent is a first-class identity. It has an entry in the IAM system, an owner, a defined purpose, and lifecycle controls. When the agent is decommissioned, the identity is decommissioned with it. This sounds obvious, but in practice, most environments have a graveyard of orphaned service accounts and API keys that nobody can confidently say are inactive.
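One way to picture an agent as a first-class identity is a registry that refuses ownerless agents and ties credentials to the agent's lifecycle. The Python sketch below is illustrative only. `AgentRegistry`, its method names, and the sample identifiers are hypothetical and do not correspond to any particular IAM product.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    ACTIVE = "active"
    DECOMMISSIONED = "decommissioned"


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str
    purpose: str
    status: Status = Status.ACTIVE


class AgentRegistry:
    """Hypothetical in-memory registry of agent identities and their credentials."""

    def __init__(self):
        self._agents = {}
        self._credentials = {}  # agent_id -> set of credential ids

    def register(self, agent_id, owner, purpose):
        if not owner or not purpose:
            raise ValueError("every agent needs an owner and a purpose")
        self._agents[agent_id] = AgentIdentity(agent_id, owner, purpose)
        self._credentials[agent_id] = set()
        return self._agents[agent_id]

    def attach_credential(self, agent_id, credential_id):
        if self._agents[agent_id].status is not Status.ACTIVE:
            raise PermissionError("cannot issue credentials to a decommissioned agent")
        self._credentials[agent_id].add(credential_id)

    def decommission(self, agent_id):
        """Retire the identity and return its credentials so the caller can revoke them."""
        self._agents[agent_id].status = Status.DECOMMISSIONED
        to_revoke = self._credentials[agent_id]
        self._credentials[agent_id] = set()
        return to_revoke

    def active_credentials(self):
        return {c for creds in self._credentials.values() for c in creds}
```

Returning the credential set from `decommission` is a deliberate choice in this sketch: the identity cannot be retired without handing back the list of keys that still need revoking.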

Static secrets are treated as technical debt. Client credentials and API keys are replaced with short-lived tokens wherever possible. Where token-based authentication is in play, token lifetimes are short, and refresh logic is explicit. Where workload identity federation is available (and it is, in AWS, GCP, and Azure), it's used.
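"Short lifetimes with explicit refresh logic" could look something like the following Python sketch. The `fetch_token` callable is a hypothetical stand-in for whatever actually mints the token (a token endpoint or a workload identity federation exchange), and the 300-second lifetime and 30-second margin are arbitrary example values.

```python
import time


class ShortLivedTokenSource:
    """Caches one token and refreshes it shortly before it expires."""

    def __init__(self, fetch_token, ttl_seconds=300, refresh_margin=30,
                 clock=time.monotonic):
        self._fetch = fetch_token      # hypothetical callable returning a new token
        self._ttl = ttl_seconds
        self._margin = refresh_margin
        self._clock = clock            # injectable so the logic can be tested
        self._token = None
        self._expires_at = 0.0

    def get(self):
        now = self._clock()
        # Refresh when there is no token yet or the current one is inside the margin.
        if self._token is None or now >= self._expires_at - self._margin:
            self._token = self._fetch()
            self._expires_at = now + self._ttl
        return self._token
```

Keeping the refresh decision in one place makes the token lifetime visible and reviewable, which is harder when a static secret sits in a connector configuration.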

Conditional access logic extends to agents. If a risk engine detects anomalous call patterns, a user's device posture changes, or a threat intelligence feed flags an IP address, that signal should be able to dynamically constrain or terminate agent access. This is only possible when agents and their tokens are visible to the systems generating those signals.
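A minimal Python sketch of a risk signal constraining or terminating agent access follows. The signal format, the severity levels, and the rule that a medium-severity signal downgrades a session to read-only scopes are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class AgentSession:
    """Hypothetical record of a live agent session and its delegated scopes."""
    agent_id: str
    user_id: str
    scopes: set
    active: bool = True


def apply_risk_signal(sessions, signal):
    """Apply a signal shaped like {"subject": <user or agent id>, "severity": "medium" | "high"}.

    High severity terminates matching sessions; anything else constrains
    them to scopes ending in ":read". Returns the affected agent ids.
    """
    affected = []
    for session in sessions:
        if signal["subject"] not in (session.agent_id, session.user_id):
            continue
        if signal["severity"] == "high":
            session.active = False
            session.scopes = set()
        else:
            session.scopes = {s for s in session.scopes if s.endswith(":read")}
        affected.append(session.agent_id)
    return affected
```

Note that the function matches on both the agent and the delegating user, so a signal about a user's device reaches the agents acting on that user's behalf.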

The identity chain is traceable end-to-end. You should be able to answer: which user authorized this agent, which connector did it use, which systems did it touch, what scopes were in play, and what did it do. If reconstructing that chain takes more than a few minutes, the logging and correlation aren't where they need to be.
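As a sketch of what a traceable chain might require from the logs, the Python example below emits one structured record per hop and reconstructs a chain by a shared trace identifier. The field names and the `trace_id` correlation key are assumptions, not a standard schema.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ChainEvent:
    """Hypothetical log record: which identity, acting as whom, calling what."""
    trace_id: str
    user: str        # who authorized the agent
    agent: str
    connector: str
    system: str
    scopes: tuple
    action: str
    outcome: str


def to_log_line(event: ChainEvent) -> str:
    """Serialize one event as a single JSON line."""
    return json.dumps(asdict(event), sort_keys=True)


def reconstruct_chain(log_lines, trace_id):
    """Return every event for one trace, in the order it was logged."""
    events = (json.loads(line) for line in log_lines)
    return [e for e in events if e["trace_id"] == trace_id]


log_lines = [
    to_log_line(ChainEvent("tr-1", "alice", "agent-7", "crm-connector",
                           "crm", ("crm:read",), "list_accounts", "allowed")),
    to_log_line(ChainEvent("tr-2", "bob", "agent-9", "hr-connector",
                           "hr", ("hr:read",), "get_profile", "allowed")),
    to_log_line(ChainEvent("tr-1", "alice", "agent-7", "billing-connector",
                           "billing", ("billing:write",), "issue_refund", "denied")),
]
chain = reconstruct_chain(log_lines, "tr-1")
```

If every component in the path carries the same correlation key, the question "which user authorized this agent and what did it do" becomes a single filter over the logs.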

A Practical Self-Assessment

Before assuming your current IAM posture covers your agent footprint, run through the following:

- Can you enumerate every AI-driven integration pathway in your environment right now?

- Do you know which identity (human or non-human) each integration runs under?

- If you needed to revoke an agent's access immediately, could you do it centrally without manually touching each downstream system?

- Are delegated tokens re-evaluated when the delegating user's risk or entitlements change?

- Can you reconstruct a full identity chain (user to agent to system to action) from your current logs during an incident?

If the answer to any of these is "not confidently," the gap is almost certainly in integration governance. The policies are probably fine, but the enforcement just isn't reaching the places that matter now.

Conclusion

AI agents are accelerating this problem, but they didn't create it. Long-lived credentials, opaque orchestration layers, and IAM that stops at the login event were gaps before agents arrived. Agents just multiply the consequences.

The teams that will handle this well are the ones who treat integration architecture as an identity governance problem from the start, not as a security review that happens after deployment.

The connector is the new enforcement boundary. The token is the new credential. The agent is a new identity class that deserves the same governance rigor as any human user.

Get that right, and scaling AI becomes a lot less scary.

Metron works with security and product teams to assess integration pathways, map non-human identity exposure, and design IAM architectures built for the way agents actually operate. If this is a gap you're looking at, we're happy to think through it with you — connect@metronlabs.com